MSFS: Windows VR Tools

In this article we look at some of the components that contribute to the render pipeline for Microsoft Flight Simulator.

OpenXR Toolkit

This very effective app was initiated by M. Bucchia. It started off as the Image Scaling Tool and has been evolving rapidly since then.

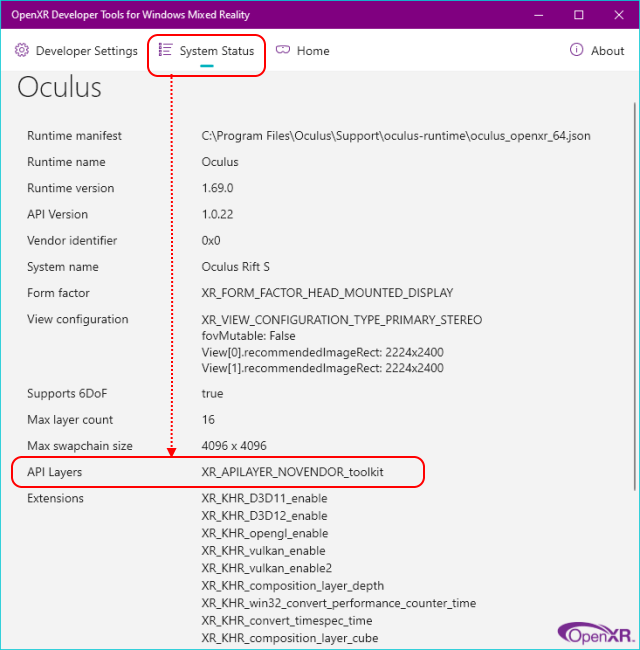

How to Confirm the Installation

Here is the System Status tab of the OpenXR Developer Tools after the OpenXR Toolkit has been installed. The API Layers heading at the bottom-left shows that the toolkit has been added to the render chain.

How It Works

The definitive explanation is on the OpenXR Toolkit website, but I like to make sure I understand these things by documenting them, so this is my condensed version:

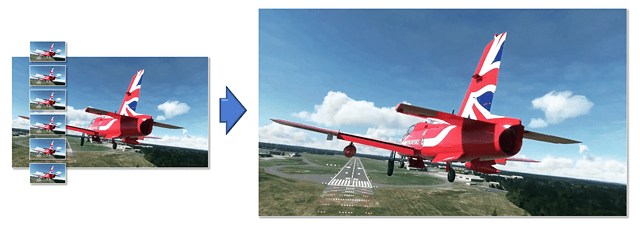

- The OpenXR Toolkit inserts itself between MSFS and the OpenXR runtime and then modifies the data passing between them.

- MSFS receives camera, frame resolution and frame buffers from OpenXR that have been intercepted and modified by the OpenXR Toolkit.

- The toolkit receives the rendered frames back from MSFS, modifies the images as requested by the VR user and then passes them on to the OpenXR runtime.

- The OpenXR runtime passes the images to the headset driver and any other connected endpoints.

In this arrangement you can increase image quality by setting a larger-than-necessary image size for the headset while instructing MSFS to create a smaller source image via the OpenXR Toolkit upscaling menu.

Note: to keep things simple, be sure to set any other scaling factors to 100%.
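
To check my own understanding of the interception idea, here is a minimal Python sketch. Everything in it is hypothetical: `Runtime`, `ToolkitLayer`, `Frame` and the 2000 x 2000 size are made-up stand-ins, not the real OpenXR API or the toolkit's code. It only shows the shape of the arrangement, where the layer reports a smaller size to the sim and hands a full-size frame to the runtime.

```python
from dataclasses import dataclass


@dataclass
class Frame:
    width: int
    height: int


class Runtime:
    """Hypothetical stand-in for the OpenXR runtime."""

    def __init__(self, width: int, height: int) -> None:
        self.width, self.height = width, height
        self.submitted: list[Frame] = []

    def recommended_size(self) -> tuple[int, int]:
        return self.width, self.height

    def submit(self, frame: Frame) -> None:
        self.submitted.append(frame)


class ToolkitLayer:
    """Hypothetical layer sitting between the sim and the runtime."""

    def __init__(self, runtime: Runtime, scale: float) -> None:
        self.runtime, self.scale = runtime, scale

    def recommended_size(self) -> tuple[int, int]:
        # Intercept the runtime's answer and report a smaller size to the sim.
        w, h = self.runtime.recommended_size()
        return round(w * self.scale), round(h * self.scale)

    def submit(self, frame: Frame) -> None:
        # Stand-in for upscaling: pass on a frame at the size the runtime expects.
        w, h = self.runtime.recommended_size()
        self.runtime.submit(Frame(w, h))


runtime = Runtime(2000, 2000)             # made-up size, not a real headset value
layer = ToolkitLayer(runtime, scale=0.85)
app_w, app_h = layer.recommended_size()   # what the sim is told to render
layer.submit(Frame(app_w, app_h))         # what the sim hands back
print("sim renders", (app_w, app_h), "runtime receives", runtime.submitted[-1])
```

The sim only ever talks to the layer, which is why it never needs to know that upscaling is happening.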

An Overview of the Render Chain

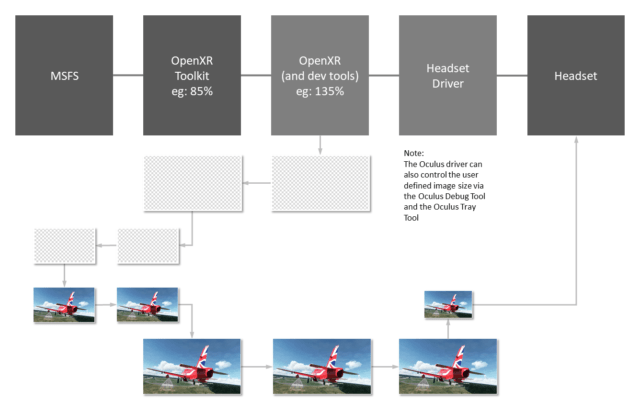

The diagram shows the journey an image buffer takes, as described by the OpenXR Toolkit developers (but see Note 1).

Note 1:

I’m not sure where the original buffer is created. Since the Microsoft OpenXR and Dev Tools apps have an image scale in their UI, it would make sense for those tools to create the buffer rather than the headset driver, but I don’t know (let me know if you do).

Note 2:

Oculus Rift and Quest headset owners might not use the OpenXR image scale slider. Oculus has its own driver interface and a community tool, the Oculus Tray Tool (OTT), to define the scale.

The Menus

Some of the menu items appear to depend on the headset in use. Mine doesn’t contain ASW Lock for example.

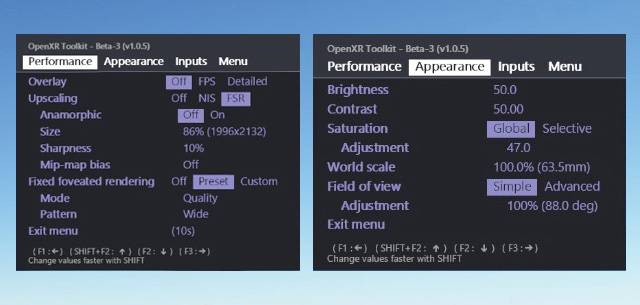

Overlay

An FPS counter or a more detailed display list placed in the centre of your view.

Upscaling

Images can be enlarged without apparent loss of fidelity using scaling algorithms referred to as upscalers. There are two upscalers available in the OpenXR Toolkit: NIS and FSR. CAS appears to be a sharpening filter, although its algorithm can also cater for upscaling if enabled.

NIS and FSR both work on one frame at a time without reference to previous frames. To avoid confusion, set the other scales (in MSFS and OpenXR) to 100% before adjusting the scale in the OpenXR Toolkit. 100% represents the final image size, which you might choose to set larger than required by the headset for additional clarity. Try lowering the scaling value to something like 85% as a starting point. This means the image will be rendered at 85% of its final size and then upscaled to 100%.
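
Here is the 85% example as a small calculation. I am assuming the percentage applies to width and height separately (I have not confirmed that this is how the toolkit interprets it), and the 2000 x 2000 target is a made-up number rather than a real headset value.

```python
def render_size(target_width: int, target_height: int, scale_percent: float) -> tuple[int, int]:
    """Size the sim renders at before the upscaler enlarges it to the target.

    Assumption: the percentage is applied to width and height separately.
    """
    factor = scale_percent / 100.0
    return round(target_width * factor), round(target_height * factor)


target = (2000, 2000)                     # example final image size, made up
width, height = render_size(*target, 85)
pixel_share = (width * height) / (target[0] * target[1])
print(f"render {width}x{height}, {pixel_share:.0%} of the target pixel count")
```

Under that assumption the saving is bigger than the slider suggests, because the pixel count falls with the square of the scale.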

The sharpening filter called CAS (Contrast Adaptive Sharpening) works well with the TAA antialiasing mode in the MSFS settings and seems to have been intended for it. CAS varies the amount of sharpening with local contrast, so detail that is already sharp receives less of it. In the OpenXR Toolkit, the strength is represented as a percentage with 0% = no sharpening.

CAS is an older algorithm than FSR and can also upscale, which makes it an option for sharpening low-resolution, high-FPS images. It also does a good job of selectively sharpening image details when no upscaling is applied.

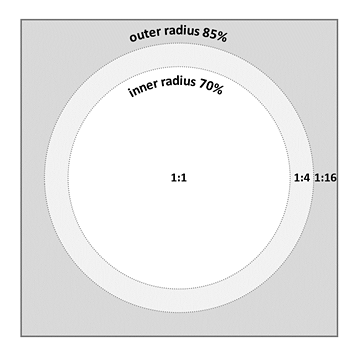

Fixed Foveated Rendering

The diagram shows a single display panel from a VR headset with fixed foveated rendering reducing GPU load and quality further from the centre of the screen, as defined by the OpenXR Toolkit.

The central region will be rendered using the fidelity you chose in the MSFS and OpenXR Toolkit settings. The inner radius marks the transition to a lower rendering quality, as does the outer radius in turn.

There are presets and custom controls that allow users to define their own preferences within the VR menu.
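
The two radii can be pictured as a simple lookup from a pixel's distance to the screen centre. This is only my sketch of the idea: the function name, the way the distance is normalised, and the 1/4 and 1/16 quality fractions are all assumptions chosen for illustration, not values taken from the toolkit.

```python
import math


def shading_fraction(x: float, y: float, width: int, height: int,
                     inner_radius: float, outer_radius: float) -> float:
    """Fraction of full rendering resolution used at pixel (x, y).

    Radii are fractions of the half-width/half-height of the panel.
    The 0.25 and 0.0625 fractions are illustrative assumptions.
    """
    dx = (x - width / 2) / (width / 2)
    dy = (y - height / 2) / (height / 2)
    distance = math.hypot(dx, dy)
    if distance <= inner_radius:
        return 1.0          # central region: full quality
    if distance <= outer_radius:
        return 0.25         # between the radii: reduced quality
    return 0.0625           # beyond the outer radius: lowest quality


for point in [(500, 500), (850, 500), (0, 0)]:
    print(point, shading_fraction(*point, 1000, 1000, inner_radius=0.5, outer_radius=0.8))
```

Widening the inner radius keeps more of the view at full quality at the cost of GPU load, which is the trade-off the presets are tuning.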

Temporal vs Spatial Upscaling Algorithms

Upscaling algorithms are becoming widely available and they make a significant difference. Here is a quick explanation of the two main types.

Spatial Upscaling

OpenXR Toolkit’s NIS and FSR upscalers are both spatial scaling algorithms. Spatial algorithms work on a single frame at a time without reference to any other and do not use AI. The advantage of a spatial algorithm is that it takes less effort to integrate into a game and is almost as good as a temporal algorithm.

Temporal Upscaling

Asobo’s upcoming DLSS implementation is temporal and the upscaling uses AI. A temporal algorithm improves its output by using data from more than one frame at a time. The AI uses recent frames to infer detail and exclude artefacts, making a more stable, crisp and detailed image. The accuracy can be improved by replacing the trained AI networks with better models.

AMD are working on a temporal version of FSR that doesn’t use AI and will run on the same hardware as the current FSR. The improvement from the temporal method is significant, which in turn means the source image can be smaller, giving a lighter load and higher frame rates.
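
A toy example helps me see the difference between the two families. It is a deliberately simplified sketch with a static one-dimensional "scene" and made-up pixel values. The real NIS, FSR and DLSS algorithms are far more sophisticated, and a real temporal upscaler also has to cope with motion between frames.

```python
scene = [3, 7, 1, 8, 2, 9, 4, 6]          # the "true" full-resolution row of pixels


def render_half_res(offset: int) -> list[int]:
    """Render every second pixel, starting at a per-frame jitter offset."""
    return scene[offset::2]


def spatial_upscale(frame: list[int]) -> list[int]:
    """Single-frame upscale: repeat each pixel (nearest neighbour)."""
    return [pixel for pixel in frame for _ in range(2)]


def temporal_upscale(frames_with_offsets: list[tuple[list[int], int]]) -> list[int]:
    """Multi-frame upscale: slot each frame's samples back into place."""
    output = [0] * len(scene)
    for frame, offset in frames_with_offsets:
        output[offset::2] = frame
    return output


frame_a = render_half_res(0)              # samples at even positions
frame_b = render_half_res(1)              # samples at odd positions
spatial = spatial_upscale(frame_a)
temporal = temporal_upscale([(frame_a, 0), (frame_b, 1)])
print("scene   ", scene)
print("spatial ", spatial)
print("temporal", temporal)
```

The spatial result can only guess at the missing pixels, while the temporal result recovers them because the second frame sampled different positions.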

Microsoft’s VR Tools

The Microsoft WMR App

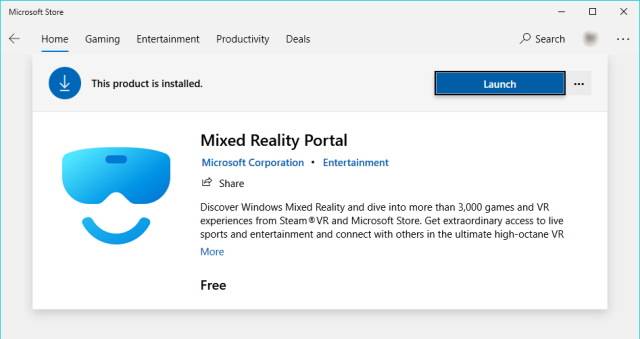

The Mixed Reality Portal app (a.k.a. WMR app) is a mandatory installation from the Microsoft Store that will offer a software interface to MSFS VR for SteamVR or the Oculus App etc. In my system, the WMR App does not recognise my Oculus headset but is still mandatory to make MSFS VR work.

The Microsoft OpenXR Developer Tools

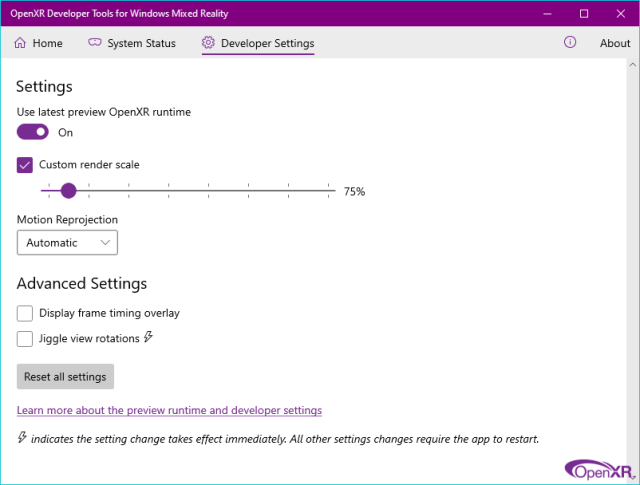

The OpenXR Developer Tools app is the companion app for WMR, available from the Microsoft Store. Download it and enable “Use latest preview OpenXR runtime” to receive the latest updates to WMR as they are released. The other settings in this app do not seem to affect Oculus users, who should use the Oculus Tray Tool to switch on Reprojection (the feature that Oculus calls ASW).

Although you can alter the render scale as shown here, I have had screen-writes to my desktop display when changing the setting to 120%. With this poor level of bug testing I don’t trust it, and suggest you use the Oculus Tray Tool to apply your preferred render scaling instead.

Oculus App

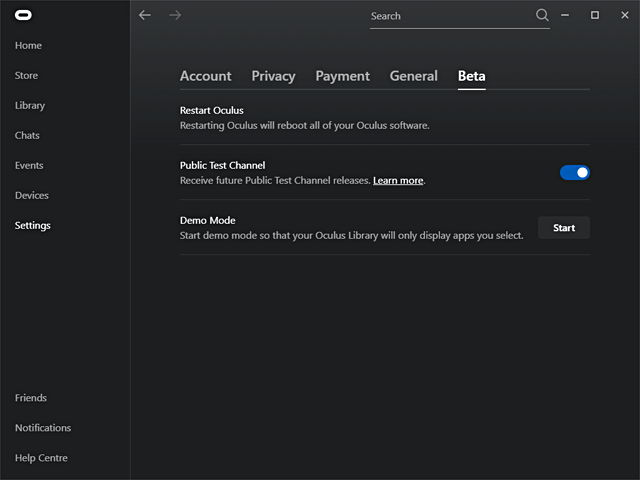

If you use Oculus you should enable the Public Test Channel to get the latest changes as they are available.

ASW/Reprojection

ASW and Reprojection are brand names for the same thing. ASW is short for Asynchronous Spacewarp. This is one of the most important elements that can allow MSFS to run smoothly. It will increase the framerate by synthesising new frames from the latest available. It does this by using a well-established technique that is faster than building the image from scratch. The cost may be an inaccuracy in the predicted movement that shows up as a smear around parts of the image where the depth contrast is high, for example propeller blades and wing struts. In those locations the background is significantly further away and the edges reveal the reprojection at work.

Tech Note:

ASW/Reprojection sits outside the main render loop. Just before the display refresh is due, it takes a copy of the most recent frame and converts it into the next frame. It does this by taking into account the pixels, the depth position of the pixels (using the Z-buffer), and the current headset orientation. It also predicts where the headset will be looking by the time the frame manipulation is complete. The smaller the difference in time and space, the more accurate the prediction will be. The pixels in the image are shifted by a value derived from their position in the Z-buffer. This operation is much faster than generating a frame from the 3D models. Stuttering will only occur when the main thread is blocked as it loads files such as textures or ATC speech data. Asobo will be offloading those duties to other cores and threads in the near future, so things will only get better.
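
The depth-based pixel shift can be shown with a one-row toy. This is my own simplification, not how any runtime actually implements it: the `head_shift / depth` formula, the depth values and the single row of "sky" (S) and "propeller" (P) pixels are all assumptions for illustration.

```python
def reproject_row(colours, depths, head_shift):
    """Shift each pixel sideways by an amount derived from its depth.

    Near pixels (small depth) move further than far ones. Positions that
    no pixel lands on are left as None: the runtime would have to guess
    what belongs there.
    """
    width = len(colours)
    out = [None] * width
    out_depth = [float("inf")] * width
    for x, (colour, depth) in enumerate(zip(colours, depths)):
        new_x = x + round(head_shift / depth)
        # Keep the nearest pixel when two land on the same position.
        if 0 <= new_x < width and depth < out_depth[new_x]:
            out[new_x] = colour
            out_depth[new_x] = depth
    return out


colours = list("SSSPPSSS")                 # sky with a propeller blade in front
depths = [100, 100, 100, 1, 1, 100, 100, 100]
result = reproject_row(colours, depths, head_shift=2)
print("".join(c if c is not None else "?" for c in result))
```

The gap left behind the blade is the kind of place where the smearing described earlier shows up, since there is no real image data to fill it.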

ASW/Reprojection Tools

ASW/Reprojection is explained in the settings section of this article if you want a quick overview of what it is about and how it works.

The OpenXR Tool

The OpenXR tool is the standard way to achieve Reprojection. Oculus provides the Oculus Debug Tool for its own brand of Reprojection, named Asynchronous Spacewarp (ASW), and the Oculus Tray Tool (OTT) provides a simple way to interface to it. I use a batch file to do the job, which is self-contained, but it’s not straightforward to figure out.

The Oculus Debug Tool

This can be found in your installation folders and it provides an interface to the Oculus service. The Oculus Tray Tool uses this executable in order to invoke changes to the system. I use it to set a render scale and ASW value.

The Oculus Tray Tool

The Oculus Tray Tool provides a user interface to the Oculus Debug Tool and extends its functionality by remembering your preferences. Although the OTT will apply your ASW/Reprojection setting when MSFS starts up, it doesn’t guard against it being altered afterwards. The Oculus app and MSFS may reset ASW to Auto when they start. You can re-apply the ASW value at any time to ensure you are not using ‘Auto’ accidentally.

OTT: Default Super Sampling Value

Super Sampling appears to be applied once when MSFS starts up. If you want to change it, restart MSFS when you have altered the setting.

Here is a trick some people used to use: reduce the MSFS render scale to 50% and increase OTT Super Sampling to 200%. It may give benefits, although the OpenXR Toolkit probably replaces this trick these days.

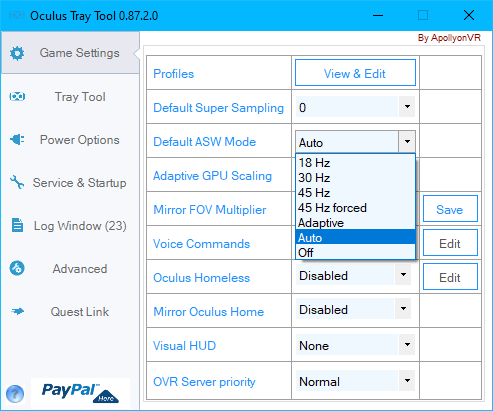

OTT: Default ASW Mode

I have been experimenting with the two options that don’t have an obvious place in the list as far as Flight Sims go: Adaptive and 45 Hz forced.

The ‘Adaptive’ option appears to be a way to modify median noise filtering if enabled (it doesn’t seem to be). It alters the way a noise filtering algorithm chooses its replacement pixel. The previously selected ASW mode will not be altered. If I’m wrong, let me know.

The ‘45 Hz forced’ option appears to be provided so developers can stress test their code. Either way, I don’t think this option is useful for a flight sim user. If I’m wrong, let me know.

Therefore, I would say the valid options are:

- Off

- 18 Hz

- 30 Hz

- 45 Hz

- Auto (default)

Oculus Mirror & Image Scaling Issues

Overview: activating the Oculus Mirror before entering VR affects the image resolution.

Note: I use an Oculus Rift S with a panel size of (2560 x 1440) split between the two eyes, and this may affect the absolute numbers I see in my tests. Yours may differ.

The Render Scale setting will provide a scaling ratio for the source image size shown in the brackets on the same line. I have no information about why this particular image size has been picked, but it might be to do with managing barrel distortion due to the type of lenses used. Better lenses are more expensive but don’t require the distortion fix.

With my configuration settings fixed and a value of 100% on the Render Scale slider the source image size will change unexpectedly for my system like this:

- When going into VR without the Oculus Mirror running the source image size is set to (1648 x 1776) which I have optimised all my settings for.

- If I start the Oculus Mirror before I start MSFS and then go into VR, the source image size is set to (1824 x 1952) and this can cause problems.

- If I lower the scaling resolution so the source image size is closer to the non-mirror value, the quality of the image in VR is reduced by the same amount. This doesn’t make sense.

- Once the source image size is defined for VR, it doesn’t change until you quit MSFS to the desktop and start again.

- Tests on two different monitor sizes do not affect the VR source image size (just as they say).

- The number in the brackets does not relate to the display size in the VR headset in a meaningful way. I would expect that when the scaling ratio is set to 100% the source image size per eye would match the VR headset display resolution per eye.
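
To put numbers on this, here is a quick calculation using only the sizes quoted above. The helper names are my own, and the per-eye panel size assumes the panel is split evenly down the middle.

```python
panel_per_eye = (2560 // 2, 1440)          # Rift S panel split between two eyes
without_mirror = (1648, 1776)              # source size reported at 100%
with_mirror = (1824, 1952)                 # source size when the mirror starts first


def ratios(size, reference):
    """Width and height of `size` relative to `reference`."""
    return size[0] / reference[0], size[1] / reference[1]


def pixels(size):
    """Total pixel count of a (width, height) pair."""
    return size[0] * size[1]


print("source vs panel:", ratios(without_mirror, panel_per_eye))
print("mirror vs no mirror:", ratios(with_mirror, without_mirror))
print("extra pixels with mirror:", pixels(with_mirror) / pixels(without_mirror))
```

At 100% the source image is roughly 1.29 times the per-eye panel width and 1.23 times its height, and starting the mirror first adds about 22% more pixels on top of that, which would explain why settings tuned for the smaller size struggle.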

Summary:

- In order to preserve normal operation, do not launch the Oculus Mirror (or similar) until after you have entered VR and the source image size has been set.

- The source image size is set by MSFS to values that I don’t have information on. It appears to be the VR size * oversample factor * offscreen buffer size factor.

- The scale value you select is changing not only the size of the source image but also the size of the image that is transmitted to the headset as if there were an intermediary stage.

- The scaling value is not just the ratio between the source image and the VR display.